Why Your WordPress Blog Had a Spike in Direct Traffic

When you're looking at your Google Analytics traffic sources, you see that your traffic comes in a few different flavors. These are:

Search traffic. This is traffic that comes specifically from the search engine results pages. Google is smart enough to include search engines other than their own here, so search traffic is still considered search engine traffic even if it comes from Bing or DuckDuckGo or what have you. They're also smart enough to exclude traffic from search engine domains but not results pages. For example, traffic from Google's blog would not be listed as search traffic.

Referred traffic. This is traffic that comes from another source. If someone clicks on a link on Forbes and it leads to your page, that's referred traffic. Referred traffic includes social media links, blog links, paid advertising from display network sites, and so on.

Direct traffic. Direct traffic is traffic that does not have an origin point that Google Analytics can track.

This comes up in a few different ways.

- The visitor typed your URL directly into their browser, visiting your page directly.

- The visitor clicked on a bookmark in their browser to open your page.

- The visitor landed on your site through a source that doesn't refer data, like an SMS message or a chat link.
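To make those three buckets a bit more concrete, here's a rough Python sketch of how a visit could be sorted by its referrer. This is not how Google Analytics actually works internally; the `classify_visit` function and the tiny `SEARCH_HOSTS` list are made up for illustration.

```python
from urllib.parse import urlparse

# Hypothetical, deliberately tiny list of search engine hosts (an assumption).
SEARCH_HOSTS = {"www.google.com", "www.bing.com", "duckduckgo.com"}


def classify_visit(referrer: str) -> str:
    """Label a visit as 'direct', 'search', or 'referral' from its referrer URL."""
    if not referrer:
        # No referrer at all: typed URL, bookmark, SMS or chat link, and so on.
        return "direct"
    host = urlparse(referrer).hostname or ""
    if host in SEARCH_HOSTS:
        return "search"
    # Anything else with a referrer, including a search company's blog.
    return "referral"


print(classify_visit(""))
print(classify_visit("https://www.bing.com/search?q=wordpress"))
print(classify_visit("https://blog.google/products/"))
```

The point to notice is that "direct" is really just the leftover bucket: anything that arrives without a referrer lands there, whatever actually sent it.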

Trends in where your traffic is coming from are a pretty important thing to watch. You can get some interesting insights from them. For example:

- A surge in search traffic can indicate that a new page of yours is ranking well, or other pages are ranking better than they were before. It can also indicate that a subject you're targeting and ranking for is suddenly more popular than it has been.

- A surge in referring traffic allows you to monitor certain kinds of outreach. For example, a surge in traffic from Facebook indicates that a post went viral, a paid campaign went into effect, or your account has grown more popular.

- A surge in direct traffic can indicate a few different things, both positive and negative.

Forgive me for being coy on that last one; that's what I'm here to talk about today. A spike in your direct traffic can have a few different meanings, largely dependent on context and your actions.

Key Takeaways

- Direct traffic in Google Analytics means visits with no trackable origin, including typed URLs, bookmarks, and untracked links.

- Legitimate spikes in direct traffic can result from print, radio, TV ads, or marketing channels that don't pass referral data.

- The most common cause of direct traffic spikes is bots, which can range from harmless scrapers to malicious DDoS attackers.

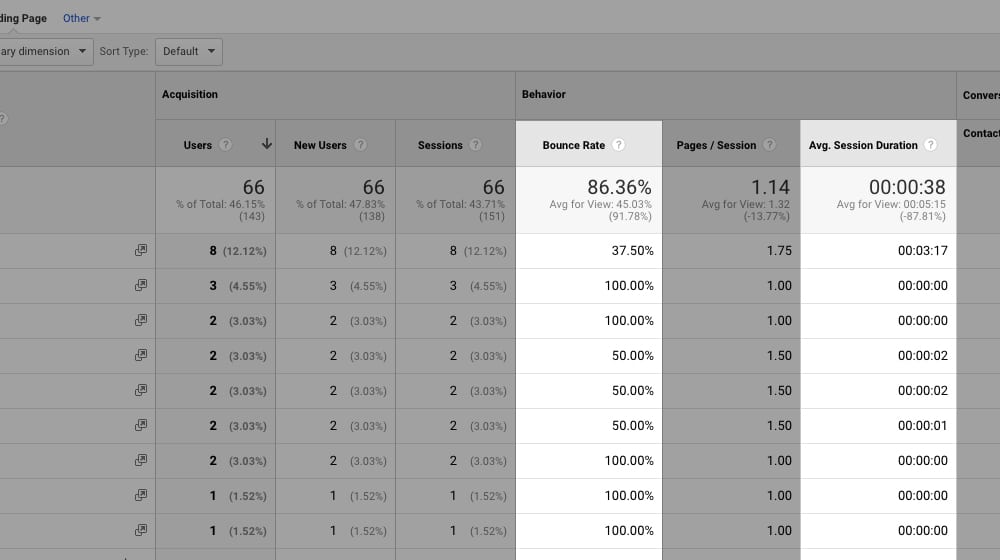

- You can identify bot traffic by checking for near-100% bounce rates, single-page visits, and similar IP address ranges.

- Solutions for bot traffic include using Cloudflare, blocking IPs via .htaccess, updating robots.txt, or filtering bot data in Google Analytics.

The Good Causes

There are a few situations where a spike in direct traffic can be a good thing. Typically, it means success in a marketing campaign through a non-standard medium.

The most common example of this is a print, radio, or TV ad. Any time you're marketing your website to an audience through a channel that does not have clickable links, the traffic you get from it is (probably) going to be direct. That said, it depends a lot on how easy your URL is to remember and type.

People with short, easy to remember URLs will get a surge in direct traffic. People trying to use longer URLs, or who have brand names that might not be easy to remember, or who are using unmemorable short-links may find that people turn to Google to find a link, and their marketing efforts end up split between direct and search traffic. There's nothing wrong with that; it just means that a surge in direct traffic alone probably isn't coming from this kind of campaign.

About as common is the traffic you get from marketing via channels that don't pass referral data. Instant messengers sometimes do this, though modern IM platforms do pass some referral data, or just tag all URLs automatically with some tracking data to make it identifiable. Still, links in some email marketing, SMS messages or chat messages can show up as direct traffic. So, if you're starting a new marketing campaign through one of these channels, you'll see a spike in direct traffic.

One of the most common causes of "direct" traffic that simply doesn't have referrer data paired to it is a site that isn't using HTTPS. A site using HTTPS will still send traffic your way, but browsers won't pass referral data from a secure page to an unsecured one. The move from HTTPS to HTTP strips that data, so the traffic looks like it's direct. If your site is using HTTPS, this won't be the case, but if you're not using it, this can happen in your analytics. If you suspect redirects are causing tracking issues, a redirect chain checker can help you identify the problem.
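Here's a small Python sketch of that HTTPS-to-HTTP behavior. It models only one referrer policy, `strict-origin-when-cross-origin`, which is a common browser default; individual sites can set a different policy, so treat this as an illustration rather than a guarantee. The function names and example URLs are my own.

```python
from urllib.parse import urlsplit


def origin(url: str) -> str:
    """Return the scheme://host[:port] part of a URL."""
    parts = urlsplit(url)
    return f"{parts.scheme}://{parts.netloc}"


def referrer_sent(from_url: str, to_url: str) -> str:
    """Return the referrer a browser would send, or '' if it sends none.

    Models the strict-origin-when-cross-origin policy only (an assumption).
    """
    if urlsplit(from_url).scheme == "https" and urlsplit(to_url).scheme == "http":
        return ""  # secure page linking to an unsecured one: nothing is sent
    if origin(from_url) == origin(to_url):
        return from_url.split("#")[0]  # same site: full URL minus the fragment
    return origin(from_url) + "/"  # different site: only the origin


# A link on an HTTPS news site pointing at an HTTP blog vs. an HTTPS blog.
print(repr(referrer_sent("https://news.example/post?id=1", "http://myblog.example/")))
print(repr(referrer_sent("https://news.example/post?id=1", "https://myblog.example/")))
```

In the first case your analytics gets an empty referrer and files the visit under direct; in the second it at least learns which site sent the visitor.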

You will also often see a rise in direct traffic alongside rises in other kinds of traffic, specifically from people who browse with anti-tracking and anti-script extensions in their browsers. If the user is preventing their browser from reporting referral data, you won't get that referral data, so it will just be listed as direct traffic even if it wasn't. That's not a very high percentage of the population, though, so you don't really have to worry about it. It's worth noting that some unexplained traffic spikes have entirely different causes, such as bot or spam traffic from certain regions.

Unfortunately, that's about where the good times end. I would venture to say that 99% of the time you see a spike in direct traffic, it's not going to be because of a marketing plan you started.

The Bad Cause

One word: bots.

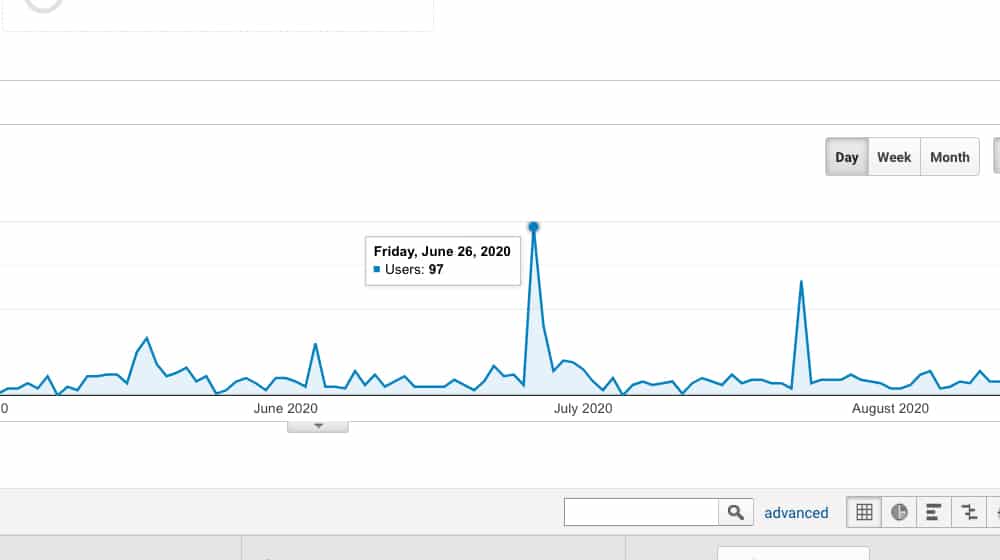

99% of the time, if you're seeing a spike in direct traffic, it's because of bots hitting your site.

Now, this isn't always something that necessitates immediate action. Bots aren't a good source of traffic, of course. They don't click your ads, they don't buy your products, and they don't promote your content. Some bots might be scraping your site for a search engine, and some might even be intentionally used to scrape data for your own use. Others, though, might be more malicious, looking for security flaws or attempting to DDoS your server. Server resources can become strained when malicious bots put excessive load on your site.

You can identify whether or not your visitors are bots versus real users in a few different ways.

- Did you start a particular marketing effort just before the spike? Possibly not bots, though it could still be bots that discovered your new posts.

- Are the hits all visiting one page on average and not clicking through links or visiting other pages? Most likely bots.

- Is the bounce rate at or near 100%? Pretty definitely bots.

- Are the source IP addresses different but similar, coming from the same blocks or ranges? Most likely bots running on a list of proxies.
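If you have session data you can export, for example from your server logs, the checks above are easy to automate. Here's a minimal Python sketch; the session format (`ip` and `pages` keys) is an assumption for illustration, and it only handles IPv4 addresses grouped by /24 block.

```python
from collections import Counter
from ipaddress import ip_network


def bot_signals(sessions):
    """Summarise bot-like signals for a list of session dicts.

    Each session is assumed to look like {"ip": "203.0.113.7", "pages": 1}.
    """
    if not sessions:
        raise ValueError("no sessions to analyse")
    total = len(sessions)
    # A session that viewed a single page counts as a bounce.
    bounces = sum(1 for s in sessions if s["pages"] <= 1)
    # Group IPv4 addresses by their /24 block to spot shared ranges.
    blocks = Counter(
        str(ip_network(f"{s['ip']}/24", strict=False)) for s in sessions
    )
    top_block, top_count = blocks.most_common(1)[0]
    return {
        "bounce_rate": bounces / total,
        "avg_pages": sum(s["pages"] for s in sessions) / total,
        "top_block": top_block,
        "top_block_share": top_count / total,
    }


sample = [
    {"ip": "203.0.113.7", "pages": 1},
    {"ip": "203.0.113.9", "pages": 1},
    {"ip": "203.0.113.200", "pages": 1},
    {"ip": "198.51.100.4", "pages": 3},
]
print(bot_signals(sample))
```

A bounce rate near 1.0, an average of about one page per session, and one block accounting for most of the hits would all point toward bots. None of them is proof on its own.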

So what do you do if this happens? Two things: figure out why it's happening, and decide if you need to do anything about it. A thin content detector can also help you identify pages that may be attracting unwanted bot traffic due to low-quality signals.

Identifying the Cause of New Bots

If you're pretty sure you're getting a bunch of bots hitting your site all at once, you should take some time to figure out what happened and where they're coming from. Here are some questions you can ask and answer.

Is there anything unique about the pages the bots are hitting, or are they spread evenly across your site? For example, if the bots are all hitting a single page, it's possible that it's all referral traffic from some site that linked to you but is being hammered by bots themselves, some of which follow links. If the bots are spread out across your whole site, it might be a scraper, either a bad one looking to steal your content or someone using a tool like Screaming Frog to pull and analyze your site data.

One thing to watch out for here is traffic hitting your login page (wp-login.php on WordPress), your admin pages, and other system pages. These bots are probably trying to brute force your admin account or looking for security holes they can exploit to compromise your site in some way.
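If you can get at your access logs, a quick count of who is hammering those pages tells you a lot. Here's a hedged Python sketch: the list of paths is an assumption based on a typical WordPress install, the `threshold` is arbitrary, and the `(ip, path)` tuples stand in for whatever your log parser produces.

```python
from collections import Counter

# Assumed WordPress paths that brute-force bots commonly probe.
SENSITIVE_PATHS = ("/wp-login.php", "/xmlrpc.php", "/wp-admin")


def login_probers(hits, threshold=20):
    """Return IPs with at least `threshold` requests to sensitive paths.

    `hits` is an iterable of (ip, path) tuples, e.g. parsed from an access log.
    """
    counts = Counter(
        ip for ip, path in hits if path.startswith(SENSITIVE_PATHS)
    )
    return {ip: n for ip, n in counts.items() if n >= threshold}


hits = (
    [("203.0.113.7", "/wp-login.php")] * 25
    + [("198.51.100.4", "/wp-admin/")] * 3
    + [("198.51.100.4", "/blog/some-post/")] * 50
)
print(login_probers(hits))
```

Keep in mind that your own logins and some legitimate plugins touch these paths too, so a handful of hits from one address isn't automatically an attack.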

Did you do anything that would initiate a string of bots hitting your site? The aforementioned Screaming Frog can be a culprit here. A lot of SEO-based tools that scan your site do so using bots, and not all of those bots are filtered or report themselves appropriately, so they'll show up as direct traffic. If you did something that would have initiated the bots, at least you know that it's probably not a problem.

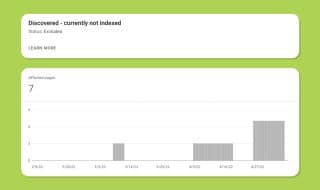

Another potential cause here is submitting a sitemap or a site link to a search engine that uses bots to crawl sites. Google used to do this, but Google will also filter itself out of Google Analytics. Other search engines still use bot-based scraping technology to build their indexes, and it's not always filtered properly.

Are the bots doing something harmful? This goes hand in hand with the targeted versus broad answer to the first question, but you can look for other hallmarks. For example, are the bots landing on a page once and leaving, or are they hitting it over and over again? Does it look like they're trying to load heavy media or spam particularly weak parts of your site, as if they were trying to DDoS you? Simple traffic matters less than bots that will accomplish something harmful if you let them.

All of this helps you narrow down whether or not the bot visits are a problem, or if it's something you can just ignore.

How to Deal with a Bot Problem

Once you've answered the questions above, you've likely made one key determination: whether or not the bots are a problem. If they are, I can help.

If the bots were a one-time pass and aren't continually hitting your site, you can pretty much just ignore it. On the other hand, if the bots come back time and again, you might want to do something about it.

If the bots appear to be attached to some kind of web service with a legitimate reason to be scraping your site, you can just let them do their thing. On the other hand, if they appear to be attached to a malicious scraper or spammer, you should take action.

And, of course, if they're there due to something you did and you just forgot you ran Screaming Frog all night last week, then you can safely ignore them.

If you've decided that you want to do something about the bots, there are some actions you can take.

Option 1: Use a service like Cloudflare to filter your traffic. Cloudflare's DDoS protection is just one of the many services they offer. Among their other services is the ability to identify and preemptively block traffic coming from bots. They have their own resources that can compare traffic patterns, and they have known lists of botnets and compromised machines they can use to just filter traffic before it ever reaches you.

The downside to using a system like this is, well, you have to use a third-party system like Cloudflare. I have nothing in particular against Cloudflare, and in fact, I recommend them to help secure your content, but it is an additional expense you'll need to consider. The free plan is pretty limited, so you'd end up paying at least $20/mo for their Pro plan.

Option 2: Block the offending bots by user agent with robots.txt. If all of the bots share the same user agent, you can edit your robots.txt file to tell them to stay away from your site. Note that robots.txt matches on user agent, not IP address, so IP-based blocking has to happen elsewhere, such as in .htaccess. You can limit a bot's ability to see specific pages, specific subfolders, or just your whole domain. Google has a detailed rundown of how to manage the robots.txt file.

The problem with this option is that some bots, particularly bots from spammers and DDoS attacks, are just going to ignore your robots.txt file. It's a directive that bots have to obey to be considered good bots, but bad bots don't care about it. If you're concerned about how AI crawlers specifically interact with your site, you may want to use an AI.txt and robots.json checker to manage those directives as well.
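For reference, here's what a robots.txt rule set for this might look like, checked with Python's built-in `urllib.robotparser` so you can see how a well-behaved bot would read it. "BadScraper" and "SomeOtherBot" are made-up user agent names, and the rules themselves are just an example.

```python
from urllib.robotparser import RobotFileParser

# "BadScraper" is a hypothetical user agent name used for illustration.
ROBOTS_TXT = """\
User-agent: BadScraper
Disallow: /

User-agent: *
Disallow: /wp-admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# The named bot is asked to stay off the whole site.
print(parser.can_fetch("BadScraper", "/any-post/"))    # False
# Everyone else may read posts but not the admin area.
print(parser.can_fetch("SomeOtherBot", "/any-post/"))  # True
print(parser.can_fetch("SomeOtherBot", "/wp-admin/"))  # False
```

This only shows what a polite bot would conclude. A bot that never reads robots.txt isn't affected by any of it.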

Option 3: Block the offending bots with .htaccess. Your .htaccess file is a configuration file used by Apache web servers, which means it's only available to some of you reading this. Blocking a bot this way is slightly complicated, and it works best when the bots use specific IP addresses. If they change their IP addresses, you'd either have to block a whole segment of the IP range or play whack-a-mole and hope you eventually get the whole list.

Incidentally, be very careful blocking whole IP ranges. Normal users could be in those ranges, and you don't want to do something like block a major IP range used by ISPs in China when one of your biggest customers is in China, for example. I've known people who had this personally happen to them.

This page includes instructions on how to use the .htaccess file to block bots.
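As a rough idea of what those rules look like, here's a Python sketch that builds an .htaccess snippet from a list of addresses. It assumes Apache 2.4 with the `Require` directive available and a host that allows this kind of override in .htaccess; the function names and addresses are placeholders of mine, not taken from the linked instructions.

```python
from ipaddress import ip_network


def htaccess_block(ranges):
    """Build an Apache 2.4 .htaccess snippet denying the given IPs or CIDR ranges."""
    lines = ["<RequireAll>", "    Require all granted"]
    for item in ranges:
        # ip_network raises ValueError on malformed input, which catches typos.
        net = ip_network(item, strict=False)
        lines.append(f"    Require not ip {net}")
    lines.append("</RequireAll>")
    return "\n".join(lines)


def addresses_covered(cidr):
    """Return how many addresses a CIDR range would block."""
    return ip_network(cidr, strict=False).num_addresses


print(htaccess_block(["203.0.113.7", "198.51.100.0/24"]))
print(addresses_covered("198.51.100.0/24"))  # 256
print(addresses_covered("10.0.0.0/8"))       # 16777216
```

The second function is there for the caution above: a /24 blocks 256 addresses, while a /8 blocks over sixteen million, so check the size of a range before you ban it.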

Option 4: Just ignore the bots. Unless you're being targeted by a dedicated denial of service attack, most of the time, bots aren't going to be a problem. There will always be roving spambots looking for unsecured admin pages using default passwords, or comment fields unprotected by captchas, and you'll never be able to block them all. You can do things like limit login attempts and use spam filters to manage the problem instead.
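On WordPress you'd normally limit login attempts with a plugin or a setting from your host, but the idea behind it is simple enough to sketch. The Python class below is a hypothetical illustration of a sliding-window limiter, with arbitrary default limits; it is not the code any particular plugin uses.

```python
from collections import defaultdict, deque


class LoginLimiter:
    """Allow at most `max_attempts` login attempts per IP within `window` seconds."""

    def __init__(self, max_attempts=5, window=300):
        self.max_attempts = max_attempts
        self.window = window
        self._attempts = defaultdict(deque)  # ip -> timestamps of recent attempts

    def allow(self, ip, now):
        """Record an attempt at time `now` (seconds) and say whether it may proceed."""
        recent = self._attempts[ip]
        # Drop attempts that have aged out of the window.
        while recent and now - recent[0] >= self.window:
            recent.popleft()
        if len(recent) >= self.max_attempts:
            return False
        recent.append(now)
        return True


limiter = LoginLimiter(max_attempts=3, window=60)
print([limiter.allow("203.0.113.7", t) for t in (0, 1, 2, 3)])
```

The fourth attempt inside the same minute is refused, which is usually enough to make brute forcing a password impractically slow without bothering real users much.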

The only problem that bot spam like this presents is that it can muck up your visitor tracking data. Seeing a spike in traffic when there wasn't actually a spike in real traffic is annoying from a data analysis point of view. You can't accurately monitor your traffic trends with spikes and surges like that, after all - how are you supposed to differentiate the bots from the humans?

Dave Buesing covers a couple of Google Analytics strategies on his blog that you can use to filter out the bot data in Google Analytics. It doesn't block the bots at all, but what it does do is give you your traffic data without the bot data. As long as you can ignore the bots on your site, and they don't pose a threat to your page, filtering them from your traffic data is a good idea. It will give you a more accurate picture of how many actual humans have visited your site, not software clicks and scraping programs. If those inflated numbers are making you second-guess which posts are actually performing, it may even influence decisions like whether to delete posts that appear to have zero real visitors.

Have you had to deal with a spike in bot traffic like this before? If so, how did you handle it?

September 08, 2020

Hi James! I am pretty new with marketing and all this stuff so I just want to check what DDoS means? Also, do you have an article on how to filter bots in Analytics? Thank you so much and hope you continue posting articles like this.

September 08, 2020

Hi Chai! Thanks for commenting.

A DDoS is a distributed denial of service attack. It's one of the most common and easiest to perform attacks designed to overload a website. You're essentially occupying all available "seats" of your website with fake traffic, so that the real traffic can't get through. This causes your website to stop loading, and can stop your website from working until the attack is mitigated.

There are a number of ways to prevent a DDoS attack - a DNS-level protection service like Cloudflare to protect your origin IP and stop bad visitors at the DNS level, a properly-configured software firewall, and a hardware firewall, to name the most common.

We don't have an article solely on filtering bot traffic, but we linked a guide at the bottom of this post on how to do this. Please let me know if it helped you 🙂

October 25, 2020

When I experienced the spike in traffic, I immediately checked and concluded it was from bots. I tried the free plan of Cloudflare for a week, then subscribed to the paid plan. It might be an additional expense, but it helped me a lot.

October 26, 2020

Hi Cathleen!

Not surprising - Google also recently removed the ability to filter bot traffic, so Cloudflare is a decent option. It will cut back on a lot of low quality visitors. You may notice your overall traffic dip a bit if they are being blocked, but that is to be expected.

You can also set up custom rules on pages that are getting hit harder than others by bot traffic, if you wanted to give them a JavaScript challenge or something that only a human can solve.

January 30, 2022

Huh, that makes sense! I was taken aback by the sudden spike in traffic we got a few days back. Bots are probably the issue.

February 04, 2022

Thanks, Dax!