When Is It Bad for Your SEO to Have "Too Many Pages"?

SEO is a constant push and pull of different factors and influences.

One of the biggest points of contention I often see marketers encounter is with content quantity. It's easy to see where it comes from, too.

On one hand, you have all of the pressure to build a site. Small sites have trouble ranking. Every page you publish is an opportunity to target different keywords and reach a different chunk of your audience. You can't attract attention if you don't have content to do it.

On the other hand, too much becomes unwieldy. With thousands of pages or more, you can never keep them all up to date. Old content slips from the rankings. Traffic drops. Users have a hard time finding what they want to see.

But, where's the tipping point?

In my experience, there's no such thing. There are some websites that rank very well with a few dozen pages or a few hundred. Microsites centered around trending topics can have fewer than ten pages and still dominate their niche. On the other hand, decades-old sites that have been publishing 10+ new posts per day have potentially hundreds of thousands, if not millions, of pages, and they still do fine.

Google, too, doesn't penalize a site for being too big.

It's clear, though, that you can't just pump out thousands of pages and hit the big time. There are new examples every year of a site that tries that, only to drop out of the ranks entirely after a few months.

I wanted to dig into this issue because it's honestly fascinating. I obviously want to sell as much content as I can (I'm a content marketer, after all!), but I also want my clients to grow in the long term, not see a brief spike and drop-off that leaves them worse off.

Not to toot my own horn, but I've been pretty successful at keeping those long-term gains going, and a big part of it comes down to understanding why "more content" isn't always better.

It's about quality, purpose, and uniqueness. It's about value. It's about knowing what your users want and satisfying those needs, not just checking off a list of keywords.

Key Takeaways

- Google doesn't penalize sites for having too many pages; quality, purpose, and uniqueness matter far more than volume.

- The Pareto Principle applies to content: 80% of traffic typically comes from just 20% of your pages.

- Low-performing pages can still provide indirect value through thought leadership, internal linking, cluster coverage, and AI citation opportunities.

- Real problems arise from low-quality content, keyword cannibalization, algorithmically similar pages, unnecessary indexed pages, and poor site navigation.

- Rapidly scaling AI-generated content may produce short-term spikes but typically leads to ranking drops once Google fully evaluates the site.

Not All Content Will Perform

Before even getting into the bulk of the discussion, I want to remind you all that there's a very powerful principle in play that you need to use to set your expectations: the Pareto Principle.

The Pareto Principle, also known as the 80/20 rule, is a pretty well-documented fact about how systems work. It was originally described in economics, but it turns out it applies much more broadly.

The general idea is that 80% of your results are going to come from 20% of your efforts. If you buy and sell stocks, 80% of your profits will come from 20% of your trades. If you run a large company, 80% of your labor will likely come from 20% of your workforce. 80% of your return on investment from paid advertising will generally come from 20% of your ads.

Shockingly, this is true for blogs as well. On your website, 80% of your sales will come from 20% of your landing pages, and 80% of your traffic will come from 20% of your blog posts.

Now, if you're thinking "but this doesn't line up with my metrics!" you're probably right. It's not like this is a hard-and-fast rule; it's just a statistical commonality. In fact, for smaller sites, I've found that the numbers are even more skewed. I have one test site that gets 95% of its traffic from 1% of its pages, for example.
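If you want to check how skewed your own site is, the math is simple enough to script. Here's a minimal sketch, assuming you've exported pageviews per URL from your analytics tool into a dictionary; the function name and the sample numbers are made up for illustration.

```python
def traffic_share_of_top_pages(pageviews, top_fraction=0.2):
    """Return the fraction of total traffic earned by the top slice of pages.

    pageviews: dict mapping URL -> pageview count (hypothetical export format).
    top_fraction: the slice of pages to examine, 0.2 for the classic 80/20 check.
    """
    if not pageviews:
        return 0.0
    ranked = sorted(pageviews.values(), reverse=True)
    total = sum(ranked)
    if total == 0:
        return 0.0
    # Always look at at least one page, even on very small sites.
    top_count = max(1, round(len(ranked) * top_fraction))
    return sum(ranked[:top_count]) / total


# Made-up numbers for a ten-page site.
example_pageviews = {
    "/pillar-guide": 4200, "/pricing": 3100, "/blog/a": 400, "/blog/b": 300,
    "/blog/c": 250, "/blog/d": 200, "/blog/e": 150, "/blog/f": 100,
    "/blog/g": 50, "/blog/h": 50,
}
share = traffic_share_of_top_pages(example_pageviews)
print(f"Top 20% of pages earn {share:.0%} of traffic")
```

Don't expect a clean 80/20 split from your own data; the point is to see roughly how concentrated your traffic is.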

The point I'm trying to make here is this: a lot of the posts you publish won't be raking in the traffic.

But that's not a bad thing.

The trick is knowing how to find value in pages that aren't getting traffic or clicks. Content you publish can help you in ways you might not expect, and might not be reflected in your analytics.

Underperforming Content Isn't Necessarily Bad

I've talked to a lot of site owners, and even some marketers, who view any blog post that isn't pulling in traffic as a liability. They act like they have a finite number of pages they can publish, and any post that isn't performing is a waste.

It's not like websites have a cap!

Content that doesn't perform well isn't necessarily bad, because you can get a lot of implicit or secondary value from those pages. For example:

- Posts that feed into a cluster can showcase your robust coverage of a cluster's topic, even if people are mainly interested in the pillar post.

- Posts that cover topics in depth can build thought leadership even if they aren't bringing in clicks directly.

- Posts that provide great information can be cited in AI overviews, even if few people click through to those citations.

- Posts that cover basic-level topics are kind of like an entry fee to show you know what you're talking about, even if your audience already knows as well.

- Posts that cover niche topics might not be relevant now, but could come into focus down the road when the industry changes around you.

- Posts with good information can be excellent targets for citation links, even if relatively few people click through.

As long as the content isn't terrible, it's not hurting you to have it around.

How Crawl Budget Affects Indexation and Ranking

One thing I often see people bring up as a refutation to what I just wrote is that sites do have a "cap" in a sense: Google's crawl budget.

Google is a massive company with incomprehensible amounts of processing power, but they still have limits. They have to decide what to index and what to ignore. One of the simplest ways they start making that decision is by allotting each site a budget of crawling before their bot moves on.

If you publish 100 pages on your site but Google only gives you a crawl budget of 10 per day, it would take 10 days for all of those pages to be crawled and indexed, at best.

But this is all based on a few misconceptions.

First of all, crawl budget isn't a limit on pages, exactly. It's also based on time. The faster your pages load, the more pages Google can crawl in a trip, so improving page load speed actually increases your effective crawl budget.
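To make the time-versus-pages point concrete, here's a toy model. Google doesn't publish per-site crawl numbers, so the time window, response times, and function names below are all assumptions I picked purely for illustration; this isn't how Googlebot actually schedules anything.

```python
import math


def pages_per_visit(time_budget_seconds, avg_response_seconds):
    """Toy model: how many pages fit into a fixed crawl window."""
    if avg_response_seconds <= 0:
        raise ValueError("avg_response_seconds must be positive")
    return math.floor(time_budget_seconds / avg_response_seconds)


def days_to_crawl(total_pages, pages_per_day):
    """The naive page-count view: days needed to get through every page."""
    if pages_per_day <= 0:
        raise ValueError("pages_per_day must be positive")
    return math.ceil(total_pages / pages_per_day)


# Same hypothetical 60-second window, two different average response times.
slow_site = pages_per_visit(60, 2.0)   # pages that take 2 seconds each
fast_site = pages_per_visit(60, 0.5)   # pages that take half a second each
print(slow_site, fast_site)
print(days_to_crawl(100, 10))          # the 100-pages-at-10-per-day example
```

In this model, a site that responds four times faster gets four times as many pages crawled in the same window, which is the whole argument for treating speed as part of crawl budget.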

Crawl budget is also all about indexation. Once a page is in Google's index, it doesn't need to be re-crawled very often; only when Google thinks they need to re-check the page, which can be guided by "last updated" info in your sitemap.
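That "last updated" info lives in the `<lastmod>` element of each sitemap entry. Most CMS platforms and SEO plugins generate this for you, but if you ever need to build one by hand, a minimal sketch looks something like this. The URLs and dates are placeholders, and `build_sitemap` is just a name I made up.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build a sitemap XML string from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        # lastmod uses a W3C date format; YYYY-MM-DD is the simplest valid form.
        ET.SubElement(entry, "lastmod").text = modified.isoformat()
    return ET.tostring(urlset, encoding="unicode")


# Placeholder pages for illustration only.
sitemap_xml = build_sitemap([
    ("https://example.com/guide", date(2024, 5, 1)),
    ("https://example.com/blog/old-post", date(2021, 11, 15)),
])
print(sitemap_xml)
```

Only bump the date when the page has meaningfully changed. A sitemap where every date is always "today" gives a crawler no useful signal about what to re-check.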

That means crawl budget is only a concern if you're publishing a lot of content rapidly, or need a large swath of your site re-indexed, such as after a penalty. For a normal site with a normal publication schedule, it's not going to be a concern. There are, of course, many other reasons your content may not be showing as indexed that have nothing to do with crawl budget.

Crawl budget can be optimized, of course. Brian Dean has a great guide on it. It's mostly stuff I've been telling people for years: robust internal linking, external linking, clustered content, and so on.

How Some AI-First Strategies Are Hurting Your Site

Much like everything else in the world, AI has changed this discussion, too.

In particular, I've been seeing a lot of marketers using AI in a lot of different ways, and some of those ways are in conflict with long-standing SEO rules. I'm still of the opinion that a reckoning is coming, though I'm less optimistic than I was a year or so ago.

AI has one significant advantage, which is speed. It's able to pump out acceptable-looking content in a matter of minutes, and there are a lot of site owners out there who have been generating dozens or hundreds of posts per day in an attempt to win on sheer volume.

A lot of these sites make it big for about a month or so, and then they disappear. It's pretty clear why, too; they never generate good content, just words on a page that look vaguely correct. They explode when they're created because the full algorithm hasn't dug deep into them yet, but they rapidly dry up when it turns out they don't have anything original to say.

This is the common story with "black hat" techniques, and it's a tale that goes back decades. PBNs, link schemes, paying for metrics, all of these strategies have worked for a short while before drying up and leaving sites dead in the water.

AI is just a little longer-lived with this kind of usage because it's less obvious that it's being exploited. Ironically, though, the more content that is pumped out, the more obvious it becomes.

Obviously, there's a ton of AI-generated content out there that does fine. I'm really just talking about the equivalent of article-spinning and spam.

What I find more interesting is the strategies using AI, not for generation, but for discovery. AI Overviews, Perplexity, ChatGPT answers, and other AI-based discovery tools now pull from the live internet and (mostly) cite the sources of their information.

That means a growing strategy is to target those citations, instead of just raw search ranking. Fewer people click those links than links in the search results, but just having your name and logo there can be valuable even for the people who don't click through. It's part of brand building and thought leadership.

Unfortunately, this has led to a lot of site owners making short-ish pages that cover narrow topics solely to be cited in the AI Overviews and other LLM discovery channels. That might be fine for targeting the AI, but it's almost definitely going to be considered thin content.

The low-hanging fruit here is a glossary. I've seen a ton of site owners spin up glossaries with maybe 50 or 100 of the common industry terms, all on their own pages. That might look great to an info-searching LLM (though they're still probably going to reference Wikipedia or Dictionary.com instead of you), but it has the potential to be absolutely deadly in terms of thin content penalties.

Glossaries that expand into knowledge bases are better, and it's possible to do single-page or paginated glossaries that work a lot better, too, but it's also very easy to do wrong.

A point I really need to hammer home here:

AI Overviews are inseparable from traditional SEO.

A ton of AI overview citations are just pulled from the top-ranking pages for those queries. If you're chasing AI citations at the expense of the SEO metrics that get you ranked, you're going to lose both. If you want to get your site mentioned in ChatGPT answers and similar tools, the foundation is still strong, well-ranking content.

Why Too Many Pages Hurts Sites (And How to Fix the Problems)

So far, I've mostly been talking about how there's no such thing as "too many pages" and that it's not going to hurt you to publish more. But that's not entirely true. There are ways that having too many pages CAN hurt your site. It's just not a matter of volume alone; it's about quality, value, and usefulness. So, I put together the main reasons too many pages can hurt, and how you can fix each one.

Reason 1: Many of the pages are low-quality or low-value.

This one is a perennial issue: you have too many pages, and they all suck.

The bar here is really, really low, though.

Just look at any news site. Those sites have years or decades of archives of coverage, a lot of which is probably 100-200 words of "breaking news" that has no real detail or utility. If the presence of that content hurt a site, no news site would exist today, or at least no archive would.

You really have to have a lot of content that pretends to be worthwhile, but isn't, to run into thin content penalties. We're talking about having 10 different pages, all with under 500 words, all covering the same topics and the same keywords. We're talking exceptionally valueless pages. Pages that have words but say nothing.
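If you want a rough first pass at spotting that pattern, you can group your short pages by the keyword they target and see where they pile up. This is a minimal sketch, assuming you have a content inventory with a URL, a primary keyword, and a word count per page. The 500-word cutoff comes from the example above; the field names and the `min_pages` default are my own assumptions, and word count alone never proves a page is low quality.

```python
from collections import defaultdict


def find_thin_clusters(pages, max_words=500, min_pages=2):
    """Return keywords that have several short pages targeting them.

    pages: list of dicts with 'url', 'keyword', and 'word_count' keys
           (a hypothetical content-inventory format).
    """
    short_by_keyword = defaultdict(list)
    for page in pages:
        if page["word_count"] < max_words:
            short_by_keyword[page["keyword"]].append(page["url"])
    # Only report keywords where short pages stack up on the same topic.
    return {
        keyword: urls
        for keyword, urls in short_by_keyword.items()
        if len(urls) >= min_pages
    }


# Made-up inventory for illustration.
inventory = [
    {"url": "/what-is-seo", "keyword": "what is seo", "word_count": 320},
    {"url": "/seo-definition", "keyword": "what is seo", "word_count": 280},
    {"url": "/seo-guide", "keyword": "seo guide", "word_count": 3400},
    {"url": "/news/update", "keyword": "algorithm update", "word_count": 150},
]
print(find_thin_clusters(inventory))
```

Anything this flags is a candidate for a human read-through, not an automatic delete. A lone 150-word news brief doesn't get flagged here, which matches the point about news archives.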

How to fix it: Just make sure your content is high quality. Every post needs to have a purpose, not just a keyword to target. Why are you creating that page, who is looking for the content you're providing, and why should they choose you over someone else? If you can answer those three questions, it's a good page to have.

I have a whole guide on how to audit your content and determine if old, low-value content is actually holding you back or not. Give that a look for more information.

Reason 2: Pages start to significantly overlap and fail to provide unique value.

Another reason why having too many pages can hurt you is when those pages significantly overlap. If you have two, three, four, or even more pages that are all substantively identical, why have them all?

People usually call this keyword cannibalization. The idea is that when a user searches for a keyword, Google is going to give them 10-ish results to pick from. You might have four options, but you're only ever going to get one slot. Only one of those pages will rank. Why have the other three?

I think calling it keyword cannibalization is a little reductive. You can target the same keyword with several pages, and all of those pages can target a different search intent, and thus can all be unique enough to rank for different people. You can't just make a list of all of your pages and their primary keyword, and delete all but the top-performing page for each keyword.

How to fix it: A content audit. You need to have a deep awareness of what the pages on your site are doing, and prune out, combine, merge, redirect, or otherwise aggregate value into one post for each unique combination of keyword, intent, and value.
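Here's a minimal sketch of what that grouping might look like, assuming you've already tagged each page with a primary keyword and a search intent by hand. The field names and the `monthly_visits` metric are hypothetical. Note that it groups on keyword AND intent together, so two pages that share a keyword but serve different intents are left alone.

```python
from collections import defaultdict


def find_overlaps(pages):
    """Group pages by (keyword, intent) and report groups with more than one page.

    pages: list of dicts with 'url', 'keyword', 'intent', and 'monthly_visits'
           keys (a hypothetical audit-spreadsheet format).
    """
    groups = defaultdict(list)
    for page in pages:
        key = (page["keyword"].lower(), page["intent"].lower())
        groups[key].append(page)

    overlaps = {}
    for key, members in groups.items():
        if len(members) > 1:
            ranked = sorted(members, key=lambda p: p["monthly_visits"], reverse=True)
            overlaps[key] = {
                # The strongest page is only a candidate to merge into.
                "strongest": ranked[0]["url"],
                # Everything else needs a human decision: merge, redirect, or keep.
                "review": [p["url"] for p in ranked[1:]],
            }
    return overlaps


# Made-up audit rows for illustration.
audit = [
    {"url": "/crm-pricing", "keyword": "CRM", "intent": "commercial", "monthly_visits": 900},
    {"url": "/best-crm", "keyword": "crm", "intent": "commercial", "monthly_visits": 1500},
    {"url": "/what-is-a-crm", "keyword": "crm", "intent": "informational", "monthly_visits": 300},
]
print(find_overlaps(audit))
```

The output is a review list, not a kill list. Whether the weaker pages get merged, redirected, or rewritten to serve a different intent is still a judgment call.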

Most sites aren't going to be in a position where they need to cut out hundreds of posts, but sites with very deep old archives of evergreen content, as well as sites with recent AI-generated explosions of content, are more likely to fall into this category. If you're unsure whether to keep or cut a post, think it through carefully before deleting it, even if it has zero visitors.

Reason 3: Similar pages can look like algorithmic content.

Another issue with having a lot of pages is that pages can start to look algorithmically-generated or AI-created, even if they aren't. I've seen this a lot in particular with local business sites, especially with franchises. When you have a ton of pages that are technically distinct, but only have a difference in a city name, you'll find that none of them are going to perform all that well.

This is another case where the bar is quite low, and you can get away with a lot these days. A handful of similar pages might mean those pages aren't going to perform at their peak, but it's not going to tank your site. It's only when you're getting into the hundreds of similar, generative-looking pages that you encounter major problems.

How to fix it: Make your pages distinct. Play up the differences between them. This is really based on vibes as much as anything else, so just try to make sure each page on your site has a unique focus and emphasis. If you're struggling with location pages that aren't ranking, there are specific steps you can take to differentiate them.
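If you want something a little more concrete than vibes, you can measure how similar your location pages are once the city names are stripped out. This is a rough sketch using Python's built-in `difflib`; the 0.9 threshold, the function names, and the sample pages are all assumptions of mine, not anything Google has published.

```python
import re
from difflib import SequenceMatcher
from itertools import combinations


def mask_terms(text, terms):
    """Replace every listed term (case-insensitive) with a {CITY} placeholder."""
    if not terms:
        return text
    # Longest names first so "New York City" is matched before "New York".
    ordered = sorted(terms, key=len, reverse=True)
    pattern = re.compile("|".join(re.escape(t) for t in ordered), re.IGNORECASE)
    return pattern.sub("{CITY}", text)


def near_duplicates(pages, city_names, threshold=0.9):
    """Return (url_a, url_b, similarity) for page pairs that match once cities are masked.

    pages: dict mapping URL -> page body text.
    """
    masked = {url: mask_terms(body, city_names) for url, body in pages.items()}
    flagged = []
    for (url_a, text_a), (url_b, text_b) in combinations(masked.items(), 2):
        similarity = SequenceMatcher(None, text_a, text_b).ratio()
        if similarity >= threshold:
            flagged.append((url_a, url_b, round(similarity, 2)))
    return flagged


# Made-up location pages for illustration.
location_pages = {
    "/plumber-austin": "We offer plumbing services in Austin. Call our Austin office today.",
    "/plumber-dallas": "We offer plumbing services in Dallas. Call our Dallas office today.",
    "/plumber-houston": "Our Houston team specializes in slab leak detection for older "
                        "pier-and-beam homes near the ship channel.",
}
print(near_duplicates(location_pages, ["Austin", "Dallas", "Houston"]))
```

Comparing every pair gets slow on very large sites, so for thousands of pages you'd want to sample or use a different similarity method. For a typical franchise site, though, this is enough to show which pages are just the same template with a different city swapped in.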

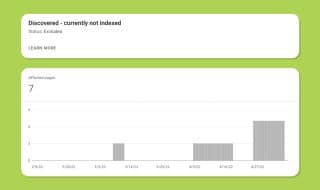

Reason 4: Many of the pages might not need to be visible or indexed.

The most technical of the reasons why having too many pages is going to hurt you is that they don't need to exist in the first place.

I've seen this come up in a lot of different ways.

- WordPress attachment pages, tag pages, and other system pages that end up visible when they shouldn't be.

- Faceted navigation that indexes all the different combinations of facets instead of canonical URLs for the end pages.

- Secondary pages for franchises that have identical content to one another, when only a core main page needs to exist for most of the results.

Sometimes this is a core function of the CMS you're using, sometimes it's a bug, sometimes it's intentional but a bad idea.

How to fix it: A site audit, focused on indexation. You can check your Search Console to see what pages are indexed, and look for pages that shouldn't be visible. You can use crawling tools like Screaming Frog or Greenflare to do your own checks, too.
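Once you have a list of indexed URLs exported from one of those tools, a quick pattern check can surface the usual suspects. Here's a minimal sketch. The patterns below are assumptions based on common WordPress URL shapes and a hypothetical set of facet parameters (`color`, `size`, `sort`), so adjust them to match how your own site builds its URLs.

```python
import re

# Hypothetical patterns; tune these to your own CMS and URL structure.
SYSTEM_PATTERNS = {
    "attachment page": re.compile(r"[?&]attachment_id=\d+"),
    "tag archive": re.compile(r"/tag/[^/]+/?$"),
    "faceted URL": re.compile(r"[?&](color|size|sort)="),
}


def flag_indexed_urls(urls):
    """Group indexed URLs by the first system-page pattern they match."""
    flagged = {}
    for url in urls:
        for label, pattern in SYSTEM_PATTERNS.items():
            if pattern.search(url):
                flagged.setdefault(label, []).append(url)
                break  # one label per URL is enough for a first pass
    return flagged


# Made-up export of indexed URLs.
indexed_urls = [
    "https://example.com/blog/guide",
    "https://example.com/?attachment_id=42",
    "https://example.com/tag/seo/",
    "https://example.com/shoes?color=red&size=9",
]
for label, matches in flag_indexed_urls(indexed_urls).items():
    print(label, matches)
```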

The exact solution depends on what you find. Pages that are indexed when they shouldn't be, like system pages, should get a noindex directive; blocking them in robots.txt only stops crawling, and on its own it won't reliably pull an already-indexed URL out of the results. Faceted navigation can be canonicalized to remove most of the problems. You might even be able to disable features in your CMS to hide or get rid of pages you don't need.
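For the faceted navigation case, canonicalizing mostly means deciding which query parameters are just filters and pointing every filtered variation back at the clean URL. Here's a rough sketch of that logic. The facet parameter names are hypothetical, and in practice your CMS or e-commerce platform would usually emit the tag for you.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical facet parameters; anything not listed here is kept.
FACET_PARAMS = frozenset({"color", "size", "sort"})


def canonical_url(url, facet_params=FACET_PARAMS):
    """Strip facet parameters (and any fragment) to get the canonical URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in facet_params
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def canonical_link_tag(url):
    """Build the <link rel="canonical"> tag for a page's <head>."""
    return f'<link rel="canonical" href="{escape(canonical_url(url))}">'


print(canonical_url("https://example.com/shoes?color=red&size=9&page=2"))
print(canonical_link_tag("https://example.com/shoes?color=red"))
```

Whether something like `page` belongs in the canonical URL depends on how your pagination works, which is why this sketch keeps any parameter it doesn't recognize rather than stripping everything.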

Reason 5: Navigation suffers as the site grows.

One of the harder-to-diagnose reasons why too many pages can hurt your site is that your navigation suffers for it. Navigation is key to helping users find what they want to locate on your site. If you have thousands of pages, just combing through them all becomes a huge chore.

On top of that, users broadly don't trust site searches over more typical Google searches or, now, asking for AI summaries. So, if they can't find what they want within a click or two, they're probably just going to look elsewhere.

How to fix it: Improve your site structure and navigation. You can do this in a ton of ways:

- Add breadcrumbs.

- Build out content clusters with robust internal linking.

- Use better organizational structures for your categories, primary topics, and subtopics.

- Cut back on the number of things you have in main menus.

Ideally, people should never be more than 3-4 clicks away from something they want to find. The more they have to dig, the more they just won't bother.
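You can measure click depth yourself if you have a map of your internal links, which most crawling tools can export. This is a minimal sketch using a breadth-first search from the homepage. The link map format, the function names, and the 4-click cutoff are assumptions for illustration.

```python
from collections import deque


def click_depths(links, start="/"):
    """Return the minimum number of clicks from `start` to each reachable page.

    links: dict mapping a page URL -> list of URLs it links to.
    """
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in a BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def pages_too_deep(links, start="/", max_clicks=4):
    """List pages that take more than `max_clicks` clicks to reach."""
    depths = click_depths(links, start)
    return sorted(page for page, depth in depths.items() if depth > max_clicks)


# Made-up link map: a chain of archive pages with a deeply buried post.
site_links = {
    "/": ["/blog", "/services"],
    "/blog": ["/blog/page-2"],
    "/blog/page-2": ["/blog/page-3"],
    "/blog/page-3": ["/blog/page-4"],
    "/blog/page-4": ["/blog/old-post"],
}
print(click_depths(site_links))
print(pages_too_deep(site_links))
```

Pages that never show up in the results at all are orphans with no internal links pointing at them, which is its own problem worth fixing.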

Easing the Fears: Too Much Content is Rarely an Issue

Overall, I think there's less of a problem with having too much content on a website than many people fear. As long as the content you publish serves a purpose and it's not overlapping with other pages for the same purpose, it's fine to have it.

Just remember to focus your efforts. Since 80% of your results are coming from 20% of your pages, you need to focus on making sure the top 20% of your site is operating at peak efficiency.

At the same time, avoid rapid scaling for no reason, especially with algorithmic or generative content. Figure out what your readers want to see, not what you think the AIs and search engines want to have. That's how you succeed with any site, whether you have 10 pages, 100 pages, or 10,000 pages.
